Benchmark Report

Evaluating Large Language Models for Access Reviews

Author: Ilay Levinget

Published: January 2026

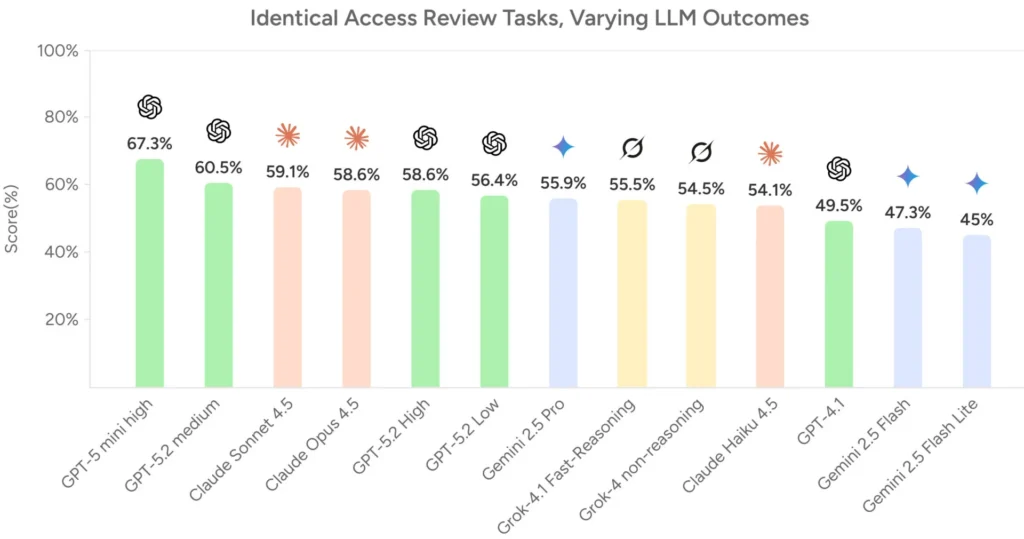

In this paper, we present a real-world benchmark evaluating the ability of modern large language models (LLMs) to make access review decisions using production-grade enterprise data. Rather than treating access reviews as a simple binary classification task, we evaluate models on the quality, coherence, and evidentiary strength of their reasoning.

Our results show that frontier LLMs can meet or exceed human-level performance, while also revealing that increased reasoning capacity does not necessarily lead to better outcomes. Finally, we describe the system-level challenges that must be addressed to make these models reliable in practice.

Why Fabrix?

Built for Scale

When identities multiply, most platforms break. Fabrix doesn’t flinch: it tracks every human and non-human identity, so nothing slips, sprawls, or spirals out of control.

Move fast, get it done

Fabrix cuts compliance review times from months to hours. Get better results with a fraction of the headcount: faster, smarter, and without the burnout.

One Platform, full control

Fabrix breaks down silos by unifying identity security across SaaS, cloud, and on-prem environments, with no gaps, no handoffs, and no fragmented tools.